For years, I relied on cloud-based assistants like ChatGPT and Claude for fast, accurate answers. But after running local language models on my gaming PC (powered by a Radeon 7900 XTX), I discovered unexpected advantages. Benchmarks don't always tell the real story, and in some areas, a local setup outshines the cloud. Here's what I learned in a candid Q&A.

Why did you decide to replace ChatGPT with a local model?

I've been experimenting with local LLMs for a while, mostly for fun and to understand their limits. My gaming PC has a Radeon 7900 XTX, which is powerful enough to run models like Llama 2 or Mistral. Initially, I kept using cloud services for quick queries because local models felt slower or less accurate. But as open-source models improved, I wanted to see if a local setup could handle my daily needs while giving me privacy, speed, and customization. Cloud models still have a quality lead, but local models are closing the gap fast. I decided to switch for a month to test real-world performance, not just benchmarks.

How does local model performance compare to cloud services for simple queries?

For simple requests, like “What's the capital of France?” or “Summarize this article”, the local model now matches its cloud counterparts. Surprisingly, it often responds faster because there's no network latency. My local setup keeps the model loaded in VRAM (24GB on the 7900 XTX), so a short answer starts appearing almost immediately. Cloud services, even with fast APIs, add a network round trip of 100–300ms. For quick answers, that difference matters. However, for complex reasoning or multi-turn conversations, cloud models still hold an edge, though a smaller one than a year ago. The quality gap has shrunk dramatically, especially with fine-tuned local models.
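
If you want to check the latency claim on your own machine, a minimal timing sketch might look like the following. It is not how the numbers above were produced. `measure_latency_ms` and `fake_backend` are hypothetical names, and `fake_backend` only simulates a backend; swap in a real local or cloud call to compare them.

```python
import time
from statistics import median
from typing import Callable, List


def measure_latency_ms(ask: Callable[[str], str], prompt: str, runs: int = 5) -> List[float]:
    """Call ask(prompt) `runs` times and return each wall-clock latency in ms."""
    timings = []
    for _ in range(runs):
        start = time.perf_counter()
        ask(prompt)
        timings.append((time.perf_counter() - start) * 1000.0)
    return timings


def fake_backend(prompt: str) -> str:
    """Hypothetical stand-in for a real local or cloud request."""
    time.sleep(0.01)  # pretend the backend needs roughly 10 ms
    return "Paris"


if __name__ == "__main__":
    timings = measure_latency_ms(fake_backend, "What's the capital of France?")
    print(f"median latency: {median(timings):.1f} ms")
```

Taking the median over several runs keeps one slow request (a cold model load, a network hiccup) from skewing the comparison.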

What surprised you most about running an LLM locally?

The biggest surprise was context awareness. I expected local models to struggle with long conversations or remembering details, but some (like Llama 2 70B) handle their 4k-token context windows well. Cloud services like ChatGPT excel at maintaining coherent threads, but careful prompting narrows that gap for local models. Another shock: privacy. With a local model, no data leaves my PC. That's a huge win for sensitive queries. Also, I can customize the model: adjust temperature and top-p, or even fine-tune it on my own data. Cloud chat apps largely lock you into their defaults. These unexpected advantages made the switch worthwhile.
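
As an illustration of that kind of customization, here is a minimal sketch that sets temperature and top-p per request. It assumes Ollama is serving on its default local port and that a model named `mistral` has already been pulled. The helper names and the sampling values are my own choices, not a recommended configuration.

```python
import json
import urllib.request

OLLAMA_URL = "http://localhost:11434/api/generate"  # Ollama's default local endpoint


def build_payload(prompt: str, model: str = "mistral",
                  temperature: float = 0.2, top_p: float = 0.9) -> dict:
    """Build a non-streaming generate request with explicit sampling options."""
    return {
        "model": model,  # assumed to be pulled already
        "prompt": prompt,
        "stream": False,  # ask for one JSON object instead of a token stream
        "options": {"temperature": temperature, "top_p": top_p},
    }


def generate(prompt: str, **kwargs) -> str:
    """Send the request to the local server and return the generated text."""
    data = json.dumps(build_payload(prompt, **kwargs)).encode("utf-8")
    request = urllib.request.Request(
        OLLAMA_URL, data=data, headers={"Content-Type": "application/json"}
    )
    with urllib.request.urlopen(request, timeout=120) as response:
        return json.loads(response.read())["response"]
```

With the server running, `generate("Name three uses for a spare GPU", temperature=0.8)` would return more varied text than the low default used here.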

Where does the local model beat the cloud that you didn't expect?

Two areas stood out: offline reliability and latency for repetitive tasks. If my internet drops, cloud services are useless, but my local model keeps running. For tasks like generating code snippets or rewriting paragraphs, I can blast through dozens of requests without worrying about rate limits or API costs. The cloud often throttles you or charges per token. Locally, the only ongoing cost is electricity. I also found local models are better at following strict formatting rules because I can tweak system prompts without restrictions. Cloud models sometimes refuse or alter formatting due to safety constraints.
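
A batch of repetitive requests with one strict system prompt could be prepared roughly like this. The sketch only builds the request bodies; each one would be POSTed to a local chat endpoint such as Ollama's `/api/chat`. The system prompt text, the model name, and `build_chat_payloads` are illustrative assumptions.

```python
from typing import Dict, List

# Illustrative formatting rule; replace with whatever format you need.
SYSTEM_PROMPT = "Reply with a single JSON object and no other text."


def build_chat_payloads(tasks: List[str], model: str = "mistral") -> List[Dict]:
    """Build one non-streaming chat request per task, all sharing the system prompt."""
    return [
        {
            "model": model,
            "stream": False,
            "messages": [
                {"role": "system", "content": SYSTEM_PROMPT},
                {"role": "user", "content": task},
            ],
        }
        for task in tasks
    ]


if __name__ == "__main__":
    payloads = build_chat_payloads(["Rewrite paragraph A", "Rewrite paragraph B"])
    print(len(payloads), "requests ready to send")
```

Because nothing here is metered, the list can be as long as you like; the only limit is how fast the GPU works through it.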

Are there any downsides to using a local LLM over ChatGPT?

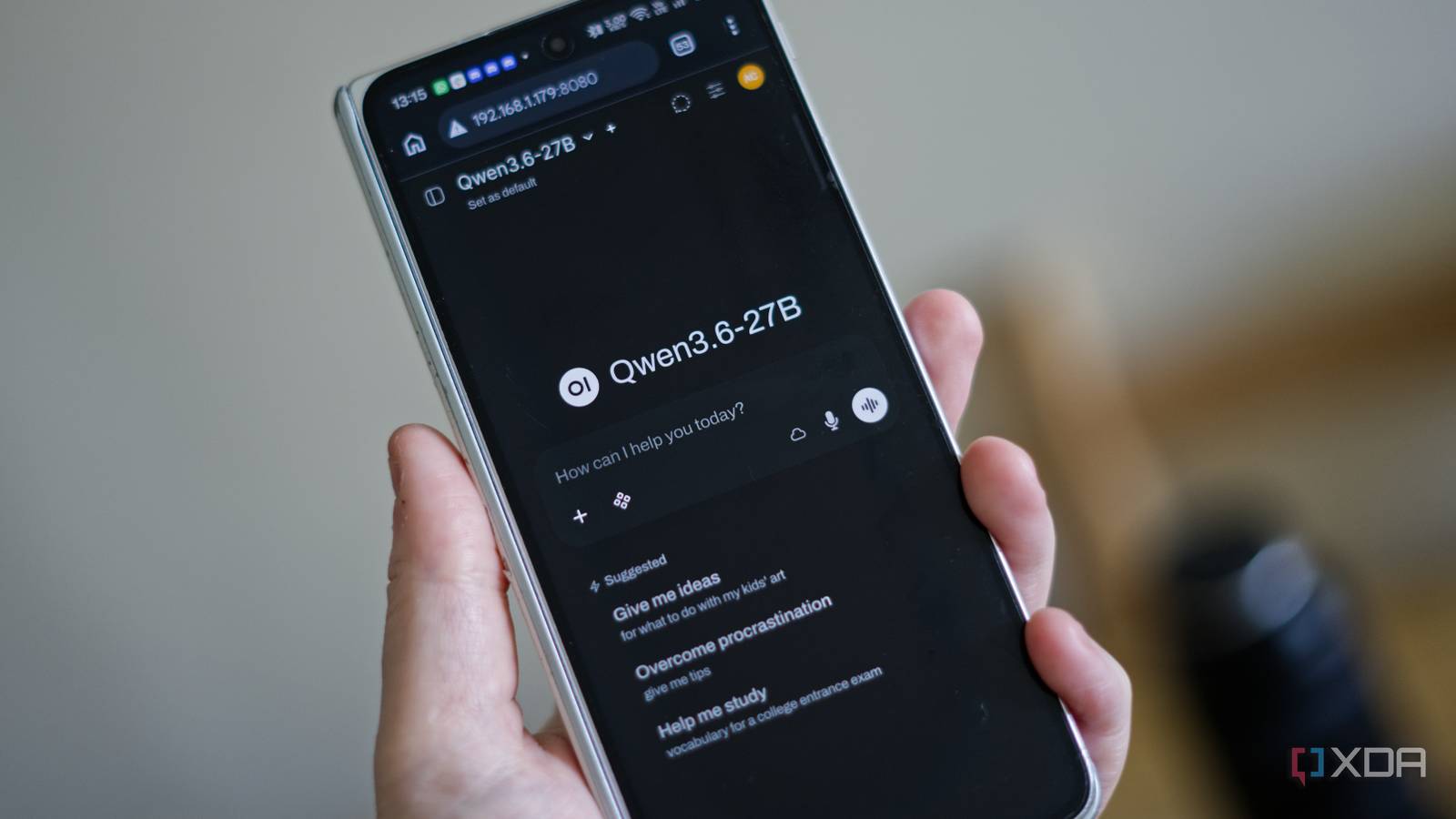

Definitely. Setup is technical: you need a powerful GPU, have to manage VRAM, and need familiarity with tools like Ollama or LM Studio. Model selection can be overwhelming, and not all models are optimized for your hardware. Cloud services are plug-and-play. Also, for very large models (70B+ parameters), my gaming PC's 24GB VRAM isn't enough; I have to use quantized versions, which lose some accuracy. Cloud models like GPT-4 are still superior for nuanced creative writing or deep reasoning. And updates happen automatically in the cloud, while locally you have to download new model versions yourself. So it's a trade-off, not a clear win.
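
The VRAM constraint comes down to simple arithmetic: parameter count times bits per weight. The sketch below estimates weight memory only. The 2 GB headroom for the KV cache and runtime overhead is a rough guess of mine, and both function names are hypothetical.

```python
def weights_gb(params_billions: float, bits_per_weight: float) -> float:
    """Approximate memory for the weights alone, in GB (1 GB = 1e9 bytes)."""
    return params_billions * bits_per_weight / 8


def fits_in_vram(params_billions: float, bits_per_weight: float,
                 vram_gb: float = 24.0, headroom_gb: float = 2.0) -> bool:
    """True if the weights plus an assumed headroom fit in the given VRAM."""
    return weights_gb(params_billions, bits_per_weight) + headroom_gb <= vram_gb


if __name__ == "__main__":
    for params, bits in [(7, 16), (13, 4), (70, 16), (70, 4)]:
        verdict = "fits" if fits_in_vram(params, bits) else "does not fit"
        print(f"{params}B at {bits}-bit: {weights_gb(params, bits):.1f} GB, {verdict} in 24 GB")
```

By this estimate a 70B model needs about 140 GB at 16-bit and about 35 GB at 4-bit, so on a 24GB card it takes even more aggressive quantization or offloading part of the model to system RAM.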

How do you decide which tasks to use local vs cloud?

I now follow a simple rule: local for speed and privacy, cloud for complexity. For quick answers, code fixes, or summarizing personal documents, I use my local model. It's fast, free after the hardware cost, and keeps my data private. For brainstorming, long-form articles, or tasks needing up-to-date information (such as anything that requires web search), I fall back to ChatGPT or Claude. Cloud models have more recent knowledge cutoffs and can access plugins. I also use the local model to test prompts before deploying them to cloud APIs, which saves money. It's a hybrid approach that leverages the best of both worlds.
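
Written down as code, the rule could look like this minimal sketch. The `Task` fields and `choose_backend` are hypothetical names. Letting sensitivity override everything else is my reading of "local for privacy", not a tested policy.

```python
from dataclasses import dataclass


@dataclass
class Task:
    prompt: str
    sensitive: bool = False              # personal documents, private data
    needs_fresh_knowledge: bool = False  # anything that depends on recent events
    complex_reasoning: bool = False      # brainstorming, long-form writing


def choose_backend(task: Task) -> str:
    """Return "local" or "cloud": local for speed and privacy, cloud for complexity."""
    if task.sensitive:
        return "local"  # private data never leaves the machine
    if task.needs_fresh_knowledge or task.complex_reasoning:
        return "cloud"
    return "local"  # default: quick answers, code fixes, summaries


if __name__ == "__main__":
    print(choose_backend(Task("Fix this off-by-one error")))
    print(choose_backend(Task("Draft a 2,000-word article", complex_reasoning=True)))
```

Putting the privacy check first means a sensitive but complex task still stays local, at the cost of a possibly weaker answer.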

Would you recommend others switch to a local LLM on a gaming PC?

Only if you're comfortable with technical setup and have a decent GPU (I recommend 12GB or more of VRAM). For casual users, cloud services are easier. But if you value privacy, want to avoid subscription fees, or need offline access, it's worth trying. Start with a 7B parameter model, which runs on most gaming GPUs. The community is active, and models improve monthly. I've been surprised how far local LLMs have come. They won't replace GPT-4 entirely yet, but for many everyday tasks, they're more than adequate. And beating the cloud in unexpected places, like latency and control, makes the switch genuinely rewarding.